Yuchen's Microblog

2022-03-03 - The CC BY-NC-SA license is bad, don't use it

The CC BY-NC-SA license permits remix, but forbids commercial use (therefore nonfree). It is a weird frankenstein that combines copyleft with nonfree elements. As a result, works under this license will beget derived works, which will also be nonfree because of the ShareAlike (SA). It is a mistake by Creative Commons, which should have not designed any noncommercial licenses, or if it really wanted to, made noncommercial an optional clause of NoDerivative (ND) licenses.

Please do the public a favour and don't release your work under the NC-SA license. If you really do not want to allow commercial use, please use NC-ND instead to avoid nonfree derivatives.

2022-02-17 - dns66

For some reason, the Google public DNS seems to have become a default for many programs, including systemd. After installing Lineage OS, I noticed it also used Google public DNS as the default DNS for WiFi. To make things even worse, it was not changeable. I tried several ways documented on the web, including changing it in the WiFi setting, but none worked. In the end, I was able to fix it with an app called DNS66. If you face the same problem, give it a try.

2022-02-17 - Reading pdf or djvu in Emacs

DocView mode is a versitile builtin major mode in emacs, with the ability to display pdf, djvu, odt etc.

However, it is not really cut for the job for displaying large pdf or djvu. It can be slow, and may hang emacs when opening large djvu files.

If you want to view pdf, pdf-tools is a great alternative. It is much faster (I'd say as fast as external pdf viewers like zathura), and offers an outline mode style toc navigation. It also supports fast regexp isearch as well as occur. There's a nice demo which you can view with mpv, youtube-dl etc. http://www.dailymotion.com/video/x2bc1is_pdf-tools-tourdeforce_tech?forcedQuality%3Dhd720

For djvu, I recommend use the djvu mode instead. It does not make emacs hang, and allows quick switch between image and text.

2022-02-15 - Google analytics alternatives

Austrian and French regulators ruled against Google Analytics based on GDPR and data privacy. Another big problem with Google Analytics is that it is nonfree. There are free alternatives like Matomo (GPLv3+) and Plausible (AGPLv3+). I used to self host Matomo and it was quite decent, but I would like to try out self-hosted version of Plausible soon due to its simplicity and lightweight.

H/t Michael McMahon

2022-02-10 - Lineage OS helps you degoogle your phone

Compared to Replicant, Lineage OS is not fully free, as it contains kernel blobs and nonfree drivers. But if you are using stock android, switching to Lineage OS will be a massive improvement.

The best part: there will be no more proprietary Google apps and services spying on you. No google play, google maps, gmail, chrome, … You can ensure that all apps are free software by installing them from F-droid.

The stock apps that come with Lineage OS are nice too. There's the caffeine mode toggle switch in the status bar, which keep the screen on for 5, 10, 30 minutes, or indefinitely. I find this feature very useful when say I need to check the phone while cooking and I don't want it to go to lock screen. The bundled browser comes with an option to have all javascript disabled, fixing the Javascript Trap with a sledgehammer. By extension, apps with internal browsers like Materialistic Hacker news client will also not accidentally execute any Javascript.

It also seems like the phone battery lasts longer, which could be due to a more lightweight system.

The only things I'm missing are in the I/O department: glide typing and a Simplified Chinese input method. I used the proprietary Google keyboard on stock android that came with these features, but the input methods available on F-droid are missing them. I find myself typing less after moving to Lineage OS because I can't type as fast as I used to.

2022-02-10 - Mumble chirping when muted? Try toggling mute cue

Recently when I connect to a mumble server and mute myself, I hear a periodic chirp / beep once every two seconds or so. It turns out that I needed to deselect "mute cue" in settings, which seems to be doing the opposite of what it is supposed to do.

2022-02-10 - Big nonfree gotcha

I just came across my first big nonfree gotcha in formal verification.

CompCert, the verified C compiler, is nonfree.

And I learned there are no free alternatives.

And opam (the ocaml package manager) installed it without any warning, possibly as a dep of VST, used in Vol 5 of Software Foundations, Verifiable C.

But I also learned that the part of CompCert required for the study of Verifiable C is free. Someone should definitely extract out the free part which I hope can be distributed on opam as say "compcert-free".

On a side note, I feel the GNU Project is missing some essential functional packages, which could result in the proglang / formal verification community having less aspirations for free software. I am not faulting the GNU Project for this, of course. But by contrast, all Emacs packages, be it independent or part of the GNU ELPA, are by default licensed under GPLv3+, which could be caused by the GNU Project having a strong foothold in Emacs.

H/t Kiran G and pgiarrusso.

2022-02-03 - Reading EPUB in Emacs

nov.el is pretty handy as an EPUB reader in Emacs. Before that I was using the calibre reader, which is slow and resource hungry.

Emacs integration also means you get the following for free:

- Use follow-mode to allow double- or triple-page display. Note that you may need to remove the header line for the text continuation to be correct.

- Annotation can be done with org-capture, which can easily get the selected text and link to the EPUB with the correct position.

One feature that I am sorely missing from calibre reader is fulltext search, which is an item in the todo list of the project.

Another feature would be an equivalent to Info-index, which could allow jumping to any section in the book, with completion.

2022-01-27 - New Hampshire and Chile free software politics

News was that a New Hampshire bill to promote free software in government services (especially mandating online government services to be usable if nonfree javascript is blocked using tools like LibreJS) is on the table, and Chile is rewriting its constitution, with proposals to include free software values (Access to knowledge) and related digital rights values (Technological and digital sovereignty and Internet privacy).

2022-01-27 - EMMS and MPV

EMMS is a multimedia playlist management tool on emacs. It allows users to control the playback of audio, video and even images by interacting with external players like mpv and vlc using IPC.

I've been using EMMS exclusively with mpv, and the separation of media playlist management and playback, as well as moving playlist management to a purely plaintext environment make perfect sense.

What is a playlist, but a list of urls? As a simple experiment, you can write a working m3u file by hand, with each line the raw path to a media file. It will load in EMMS, neat.

What's also neat is mpv, a media player able to handle any kind of url you throw at it. Local files? Of course. Remote file urls over https? Yup. SFTP? You don't even need to sshfs mount, just do

mpv sftp://host:port/path/to/media.file. How about Libreplanet videos? It will work, through youtube-dl extractors, just dompv https://media.libreplanet.org/u/libreplanet/m/fsf-award-ceremony/. By the way, there's no mediagoblin extractor, but youtube-dl could find the media file using its rather versitile generic extractor.You can also create a playlist based on a youtube channel or playlist, with the help of some elisp plumbing calling

youtube-dl -j <playlist-or-channel-url> | jq '.webpage_url'and add the urls to a playlist. If you want, you can even bind a key to download a remote media piece you like!2022-01-27 - From mu4e to gnus

mu4e is a very popular emacs email client, known for the ease of setup.

However it has its problems. The search is not very good (for example I had a hard time searching for patterns with special symbols like subjects containg the string "[gnu.org #". The indexing is part of the program, which combined with its lack of concurrency, makes it rather tricky to schedule update its index (on a side note elfeed has a similar problem). One may need to perform some hack by killing any mu process running in emacs in a cron script before indexing. I had been doing manual indexing, and waiting for 30 seconds to index all the mails whenever I want to check the mailbox for update was rather distracting.

I made the move to gnus, which did not disappoint. Its search is more useful and natural - one does not have to worry about symbols. If gnus is configured with an imap server program like dovecot, indexing becomes that program's job, which could run as a cron job without bothering gnus. Since the imap server handles concurrency, one can even open up gnus in multiple emacs instance. As an added benefit, opening mailboxes is also much faster than mu4e.

As mentioned before, the popular RSS reader elfeed operates on a similar model as mu4e, thus lacking concurrency. In fact, it is even more limited, as if one runs elfeed on two emacs, the update in one does not reflect on the other! I hope there could be an emacs RSS reader with the simplicity of elfeed, but taking gnus approach, leaving fetching, indexing and storage to a (local) server program, while the reader itself simply acting as a local client.

2022-01-25 - User freedom on the web

The user freedom issues on the web are slightly complicated.

- Client-side: is code executed on the client machine (e.g. javascript)

free? If so then the user's freedom is protected.

- Then there's also the case when the client blocks the execution of nonfree javascript (e.g. by using LibreJS), in which case the user's freedom is still protected.

- There are also false positives when using LibreJS, when the javascript code is free, but not labelled or annoated in a LibreJS-compliant way. In this case, since the client code is free it is safe to whitelist the scripts.

- Server-side: is the server not under the user's (individual or

collective) control, doing computing on the user's behalf? If so

then that's bad (SaaSS), otherwise user freedom respecting.

- Examples of computing inherently one's own include translation, photo editing etc.

- Examples of computing not inherently one's own are generally activities requiring communication with others' computers, include accessing information published by others (e.g. reading a blog post) and publishing information (e.g. tweeting).

Case studies:

- Visiting the FSF homepage in a graphical browser like Firefox

- This is fine, because all Javascript is trivial or LibreJS compliant. Reading information published by the FSF is computing not inherently one's own, so it's not SaaSS hence freedom respecting.

- Tooting on Mastodon using its web client

- This is generally fine, as Mastodon webclient is free software, and some instances (like hostux.social) are LibreJS-compliant. Publishing microblogposts is a communication act, thus the Mastodon service that does so is not SasSS.

- Watching videos on Peertube using its webclient

- Even though Peertube is unusable with LibreJS on, it is free software from backend to frontend. Whitelisting is generally safe. Watching videos is again access information published by others, thus not SaaSS.

- Recaptcha

- Requires nonfree JS, bad.

- Watching YouTube videos on an invidious proxy

- similarly reading tweets on nitter, reading stuff on bibliogram or doing these activities using a free software client. This is certainly OK on the frontend as well as backend since it's communication.

- Routing on osmand

- Osmand is a free software client and all computation happens locally so it's good.

- Routing on osm.org

- It depends whether the routing calculation is done locally using free javascript programs, or remotely (SaaSS).

- Reading github issues

- Doable with LibreJS blocking all non-trivial nonfree javascript, and it is communications.

- Publishing tweets using free software clients

- Using free clients is fine on the client side, and publication counts as communication i.e. not SaaSS. This is what the FSF does.

- Get weather forecast

- Even though the forecast is done by computation on meteorological data, the user did not supply data, thus such computation does not count as SaaSS. It is similar to when someone does computation in their head (to outline, draft and revise) before publishing a blog post.

We can spot some trends from these case studies:

- Generally, a free software (not necessarily web) client is good. Many tools offer help with this, including the alternative frontends, haketilo and woot.tech.

- F-droid Non-Free Network Service antifeature is not consistent with the above method. In fact, it is not clear what is the definition of this antifeature. For example, free alternative frontends like NewPipe and Fritter are labelled with such antifeature, though by the analysis above these are fine.

- AGPL is mostly irrelevant in this discussion because it is mostly concerned with the freedom of the service provider, even though it is the best software license.

- It's OK freedom-wise to use GAFAM service as long as the client is free and the service does not count as SaaSS, though there are separate concerns like user privacy.

Further readings:

- Client-side: is code executed on the client machine (e.g. javascript)

free? If so then the user's freedom is protected.

2022-01-25 - lxo open letter

Alexandre Oliva (lxo) posted an email reply to a celebrated feminist leader regarding https://stallmansupport.org and the drama. Yet another powerful piece.

In other news, with the FSF expanding process to allow associate members to nominate new board members, I hope lxo can be back on the FSF Board.

2022-01-20 - Curry-Howard correspondence and Dependent types

What is Curry-Howard correspondence?

Roughly speaking, it says there is a one-one correspondence between world of propositions (proofs) and world of types (programs).

A simple illustration:

For any two propositions P and Q, the proposition that P implying Q, i.e. P -> Q, corresponds to: for any two types T and S, the functional type T -> S.

Finding a proof of P -> Q corresponds to finding an element in T -> S.

In a more simplified setting, proving a proposition P corresponds to finding an element of T.

In coq, one can write:

Theorem p_implies_q : P -> Q.

Which looks exactly the same as, except the keywords

TheoremandDefinition,Definition some_function : T -> S.

To show the pun is genuine, if you want, you can prove a definition and define a theorem:

Definition add_one : nat -> nat. Proof. intro n. (* introduce the argument of the function to the context and call it n. *) apply succ. (* apply succ to the "goal" *) apply n. Defined. Definition imp_refl : forall P : Prop, P -> P := fun P p : P => p.

It may still feel rather forced, as the proof of the definition is way less readable than a direct definition, and the definition of the theorem is just plain silly (Logical Foundations has a more reasonable example - the evenness of a number).

How about an actual example, where one cannot do without the correspondence? Well, look no further than dependent types!

Say you want to work with vectors, which are lists of a certain length, which is encoded as a pair (the list, the length) with a condition:

Definition vector (X : Type) := { '(xs, n) : list X * nat | length xs = n }.

This is called a dependent sum type, or sigma type, where the vertical bar

|serves the same role as in set theory, meaning "such that".The full definition is a bit more involved which we will skip.

Now, how do you define a concat function that takes two vectors of length m and n and returns a concatenated vector of length m + n? You will have to do two things in such a definition:

- Define the resulting pair.

- Prove the length of the resulting list is m + n.

Now there's no escaping the pun!

To learn more about this, check out Logical Foundations and Verified Functional Algorithms. More specifically, ProofObjects: The Curry-Howard Correspondence and ADT: Abstract Data Types.

2022-01-18 - The Djokovic case

Rules are rules the Prime Minister declared, especially when it comes to our borders. Rules weren't rules last year, when celebrities travelled to and from Australia while ordinary people were denied a permit to leave, or even more scandalously, not afforded the most basic right of a citizen to return to their country. Now though, when Australia chooses to insist on a medical procedure as a condition of entry to Australia the rules are suddenly rigid.

Meanwhile, asylum seekers beg Australians: ‘Don’t forget us when Djokovic leaves’, and PM accused of ‘outright lie’ after claiming detainees in Melbourne hotel are not refugees.

2022-01-18 - theyvoteforyou.org.au

theyvoteforyou.org.au looks like a very useful resource for democracy and shouldn't be shut down.

2022-01-18 - Free software vs. open source

Alexandre Oliva's post Against Software Tyranny is a good read on the difference between free software and open source. Free software is the liberation of computing, whereas open source is to hope for the corporations to be enlightened. Much like copyleft vs. pushover and dare I say progressives vs. liberals.

Corporations view "open source" software as commons, just like natural resources, free for their taking. This is the key cause of unsustainable free software development recently under discussion. This is also why I don't really like to refer to the free world as commons.

A commons is too weak to protect our computing freedom, and only makes sense if nonfree software is eliminated.

2022-01-18 - Warren, Jayapal Demand Google Stop Trying to 'Bully' DOJ Antitrust Official

Warren, Jayapal Demand Google Stop Trying to 'Bully' DOJ Antitrust Official

Google is too big and powerful and should be broken up. Everyone should read the antitrust filings against Google, easpecially the one led by the Texas AG. Another good read which the filing was apparently built on, with more comprehensive introduction of the relevant technologies is Why Google Dominates Advertising Markets by Srinivasan.

2022-01-13 - Why you should read Software Foundations

I finished reading the Logical Foundations, the first volume of Software Foundations. What an amazing book.

I learned about formal proofs before, by playing the natural number game designed by Kevin Buzzard, which is in Lean.

Logical Foundations covers all that and goes much deeper.

There are many great things about this book. You can download it, ignore the html files, and just burn through the coq .v files, which are actually the source of the webpages. The texts are basically comments in the .v files, but the readability is not worse than the html files, and actually much better in emacs. It is almost like literate programming.

As an aside, initially I had some problems with getting org-babel to work, but the files were deleted years ago. After reading LF I realised org-babel is not really important, since I can just happily work through the giant .v files in Proof General (which you can easily install manually without MELPA).

Another neat thing about LF is that it really demonstrates the parallel between propositions and types, by using the same arrow notations for implication and function, by making no notational distinctions with proofs of a theorem and elements of type. A Proof is a Definition, and a property of numbers is a dependent type. If you want to understand Curry-Howard Correspondence, you can't go wrong with Logical Foundations.

By the end of book, you will have worked through the implementation of a mini imperative language called Imp, and proved some simple properties of programs written in Imp - great value for your time!

2022-01-13 - A simple shell script to get Australian weather forecast from BoM

In this micropost I'll show how to write your own android weather app ("app" in a liberal sense) to retrieve weather for an Australian town, using Melbourne as an example.

The short form (précis) 7-day forecasts, containing min / max temperature, humidity level and chances of precipitation are available as xml files provided by the BoM - simply go there and get the link for the state you are interested in. For Victoria it is ftp://ftp.bom.gov.au/anon/gen/fwo/IDV10753.xml.

So the first step is to download the xml to a temp directory, which can be done with wget:

cd $PREFIX/tmp rm IDV10753.xml || true wget ftp://ftp.bom.gov.au/anon/gen/fwo/IDV10753.xml

Next one needs to query the xml file for the relevant data. Locate the city name, date, temperatures and chance of pricipitation using the nice xmlstarlet tool and format them nicely.

result=`xml sel -t -m '//area[@description="Melbourne"]/forecast-period'\ -v "substring-before(@start-time-local, 'T')" \ -o " - min: " -v "element[@type='air_temperature_minimum']" \ -o " - max: " -v "element[@type='air_temperature_maximum']" \ -o " - " -v "text[@type='precis']" \ -o " - chance of precipitation: " -v "text[@type='probability_of_precipitation']" \ -n IDV10753.xml`

And finally, send the forecast to yourself using Termux:API.

echo -e "7-day weather forecast in Melbourne:\n${result}" | \ termux-sms-send -n 044444444 # replace 044444444 with your phone number

To use it in termux, it doesn't hurt to specify the location of the shell in a shebang:

#!/data/data/com.termux/files/usr/bin/bash

Finally, to make the script a bit more convenient to invoke, use Termux:Widget, and copy the script to

~/.shortcut, and you can make it appear as a button in a desktop widget.Enjoy the Melbourne weather!

2022-01-11 - Virtual event organisers, please think of people in the APAC region

It is impossible to hold an online event that works for people from America, Europe and Asia-Pacific (APAC) regions at the same time. Normally one region will be dropped in favour of the other two. Most online tech events are organised by people located in Europe or America. 98% of them are set at a time that accommodates people in both regions, thus not work for people in APAC. Perhaps this is because not as many from the APAC region attend in an APAC-friendly time, compared to the number of participants from Europe in an Europe-friendly time (say) when the event is organised by someone in the States, which could be an effect as well, of events not accommodating APAC time.

As such, if organisers in Europe or America sometimes set events time to be APAC-friendly say 40% of the time, instead of accommodating those across the pond, a more diverse and vibrant community may result. More concretely, that roughly translates to 12am-12pm UTC for Europe-based organisers, 7pm-7am UTC-5 for US East Coast, and 3pm-3am for US West Coast.

Thank you!

2022-01-06 - Coq is cool

Having written emacs lisp for a while and grown my emacs init file to thousands of lines, I decided to revisit the Land of Lisp, a fanstastically and humorously written book about lisp.

The book said that lisp is basically lambda calculus, which got me thinking. How is it lambda calculus?

So I went back into the rabbit hole that drew me in a few years ago, not knowing that's where I was going. I started by refreshing my knowledge reading Types and Programming Languages (TAPL). After reading it I still didn't quite get how lisp is basically lambda calculus.

TAPL mentioned Curry-Howard correspondence, a theory that connects logic systems with type systems. I wanted to know what each of the 8 vertices of the lambda cube corresponds to and how, which was not covered in TAPL. After a failed web surfing session in an attempt to find quick answers to my question, I was reminded of the Software Foundations series, and indeed, it talked about Curry-Howard correspondence with real code.

So I went on to read the first volume titled Logic Foundations. Previously I had an (irrational?) aversion towards logic, fearing much of it was all dry tautology and not as fun as more "useful" mathematics like probably theory. Boy was I wrong. Logic Foundations introduces coq, which I didn't touch due to the same aversion. But as it turned out, coq answered most of my questions about formal mathematics, and fully developed my (unoriginal) ideas of formalising mathematics. Maths is code. Theorems are identified by their proofs. You can apply a theorem in the proof of another theorem, matching terms. You can parameterise theorems etc. etc. Coq is something I wish I knew when I was a PhD student. The logic system is constrained in CoC, calculus of constructions, which is the top vertex in the lambda cube. I am still reading the book and can't wait to find out the extent of mathematics covered by it and what can be done about non-constructive systems (like the classical maths where you can cheat with excluded middle) using coq or other formal systems.

If one day programs and proofs are indistinguishable, the two traditions will blend. Maths has no copyright, but advanced maths can be hard to understand, though written in well-commented code it will be more accessible. Computer programs are the opposite, heavily copyrighted under good free licenses like (A)GPLv3+ and evil proprietary licenses, but more accessible (though code obfuscation is also thing but it cannot have gaps). My hope is this will bring the best of both worlds, that is, an elimination of copyright in computer programs (look, it is all maths, and copyrighting theorems and proofs are absurd!), and a more accessible corpus of advanced mathematics.

2021-12-30 - Emacs is cool

Emacs blows Vim out of water.

I started using vim in late 2000s, perhaps 2010, as I was drawn by the marketing slogan with vim "what you think is what you get".

I tried to get vi keybinding on everything, in browser (vimperator), pdf viewer (zathura), window manager (i3) etc. I used vimwiki heavily for knowledge and task management, and even wrote the initial version of the pandoc vimwiki reader.

About 18 months ago (around end of June 2020) I decided to give Emacs a try. The reason for this decision was more ideological than technical - I was fed up with a free software hostile environment, and Emacs always striked me as a centre piece of the GNU project.

I started the official Emacs distribution with an empty config file, and read the emacs tutorial. Coming from vim, it was quite uncomfortable to go without the convenient keys from hjkl for basic navigation to C-d for scrolling down half page and . for repeating the last action.

Org mode came as a double culture shock for someone used to vimwiki. Why would anyone think having a level-8 heading is a good idea? The link format was also a bit more verbose. Online resources focused more on GTD workflow than describing the markup syntax. And the table auto-align was nothing fancy - we have that in vimwiki.

But soon enough, I found out Emacs is indeed way better than vim. It can be used for almost about every computing task, including web browsing, emails, media player, executing shell commands, reading epub, managing files, version control, and of course, text editing. Days without emacs now seemed like dark ages.

Some aha moments in my Emacs journey:

- When I discovered icomplete and fido, completion and function / variable discovery became so much easier.

- When I combined icomplete with (setq org-refile-use-outline-path t), (setq org-refile-use-cache t), and (setq org-outline-path-complete-in-steps nil), which allows me to jump to any entry in a huge org file within seconds.

- When I learned about emacsclient, so that I can have a long running emacs server so that I can share states across multiple emacs frames (or "windows" in a DE / WM context), and I don't lose anything when accidentally typing C-x C-c and quitting emacs.

- When I found out EMMS to be the only media player with persistent playlists that I can switch and control with ease, and with the help of mpv, it can play urls in multiple schemes from http to sftp, and with the help you youtube-dl, it can play mediagoblin webpages, which allowed me to go through talks at https://audio-video.gnu.org/video/ and https://media.libreplanet.org/videos without losing track.

- When I read the GTD book, despite not having a secretary to bring me the tickler folder or a koi pond to for me pave around with a wine glass in hand, I could finally put the design of org mode in context and vastly improve my workflow by implementing my version of GTD.

- When I switched from webmail to mu4e, I learned how to get mails as a client and that emails are basically plaintext files (e.g. maildir) which can be read and written to locally and synced to remote server, and that smtp and imap are completely separate areas of concern; when I switched from mu4e to gnus, I learned how to serve mails using dovecot as an imap server and talk to a mail server using telnet, as well as the nice thing about offloading indexing to an external party (updating mails in gnus is instant, compared to mu4e-update-index).

The most useful tool, the killer feature for me, is of course org mode. I spent most of my emacs screen time in org mode. I can't think of any aha moments related to it, but the process of adoption was gradual and there are so many nice features. I approached org mode with starting using one new feature every few weeks: speed command, org agenda, links, properties, org capture, effort estimate, clocking, tagging, refiling, attachment, online image display, citing… The problem with marketing org mode, and emacs in general, is that it integrates so much in my life and its workflow is so involved, that it is rather difficult to come up with a quick demo to impress people.

One final point is that my usage is pretty vanilla, in that I strongly prefer the core distribution and GNU ELPA. I also installed a few packages from NonGNU ELPA, but I don't use MELPA at all, both for ideological reasons and simplicity. In the rare occasions when I really need a package not in core / NonGNU ELPA, I normally install it manually with make / add-to-list load-path and require / autoload.

Enough rambling…

2021-12-23 - Curve25519

A tour of Curve25519 is a great introduction on elliptic curve encryption. It explains how EC is like modular arithmetic, with the analogue what multiplication to EC is what exponentiation to modular arithmetic.

2021-12-23 - Theory of Bitcoin

The theoretical model of bitcoin is surprisingly simple. A transaction is a list of inputs and outputs, where the inputs trace to outputs of previous transactions. Transactions form blocks, and blocks form the blockchain with each block verifying the previous ones, going all the way back to the genesis block. Proof of work requires finding a nonce that hashes to a sufficiently small number. One new block every 10 minutes, transaction fees and award of 6.25BTC (halving every 210k blocks) goes to whoever created the block (aka miner). A total of 21mil BTC, running out by ~2140.

By the way, the whitepaper is not very useful for understanding the theory of bitcoin, but Wikipedia and the bitcoin wiki are far better resources IMO.

2021-12-09 - Ombudsman finds Victoria border permit system 'unjust'

Link. Not the first time the Ombudsman has such findings about pandemic measures taken by the Victorian Government.

2021-12-08 - EmacsConf 2021 alternate streaming solution

LibreAustralia hosted an alternate EmacsConf 2021 stream for APAC timezones on 28th November. It was a fun event to organise.

How to stream an event like this with a fully free software stack? Initially I envisioned a server streaming solution like I did with the inaugural event, by using ffmpeg to feed a local video file to icecast:

ffmpeg -re -i ./video.webm -codec copy -content_type video/webm icecast://source:password@localhost:8000/live.webm

This works very well with one video, but with multiple videos one will need to concatenate them. The concat idea has two problems:

- Not all videos can be concatenated. In fact, in most of my experiments, the output video could not play after the the portion corresponding to the first input video.

- There's absolutely no control of the playback. Once the stream started, the whole event is scripted, and to adjust the schedule one has to kill the stream first.

Problem 2 can be fixed by utilising the fallback mountpoint with fallback-override:

<mount> <mount-name>/live.webm</mount-name> <fallback-mount>/fallback.webm</fallback-mount> <fallback-override>1</fallback-override> </mount>

This way the stream never dies, provided a standby video plays on on the fallback mountpoint.

Unfortunately not all videos can move smoothly between the main and the fallback mountpoints. Some transitions cause unpleasant visual artefacts lasting for a dozen seconds, others (even worse) with audio turning into high-pitch scratching noise and never recovering. For certain videos these problems even occur when a video transitions to itself.

It may be possible to use ffmpeg to reencode videos that transitions smoothly, which is something to figure out for the future.

That's pretty much a deadend in server streaming.

On to desktop streaming, which offers the ultimate flexibility of playback control, but is heavier on bandwidth and computing resources. One idea was OBS Studio, which unfortunately does not have icecast as one of its streaming options, but rather requires a hack to recording to an icecast mountpoint.

I experimented with a setup from Amin Bandali, which seems to me like using OBS Studio as an ffmpeg wrapper. Unfortunately I would get segfault unless the stream is done with a minimal resolution.

Inspired by LibreMiami's watch party, I decided to try out Owncast. It was extremely easy to set up, and I could get an acceptable streaming performance with some low settings.

However, as pointed out by Amin, owncast uses rtmp as the streaming protocol, which probably encodes to mp4, a patent encumbered format.

How about streaming to BBB with screen share + monitor system audio as source? A test with Leo Vivier showed that it has a similar performance to owncast. The downside with BBB is that it requires javascript and is less accssible than icecast for viewers.

What worked, in the end, was an direct ffmpeg to icecast streaming (thanks to Sacha Chua):

ffmpeg -loglevel 0 -ar 48000 -i default -re -video_size 1280x720 -framerate 25 -f x11grab -i :0.0+0,20 -cluster_size_limit 2M -cluster_time_limit 5100 -content_type video/webm -c:v libvpx -b:v 1M -crf 30 -g 125 -deadline good -threads 4 -f webm icecast://source:pass@host:8000/live.webm

The captured area is shifted by 20 pixels in order not to grab the title bar of the player and emacs window.

The performance of this approach was better than any of the other desktop streaming solutions, probably due to its bare ffmpeg setup without any bells and whistles.

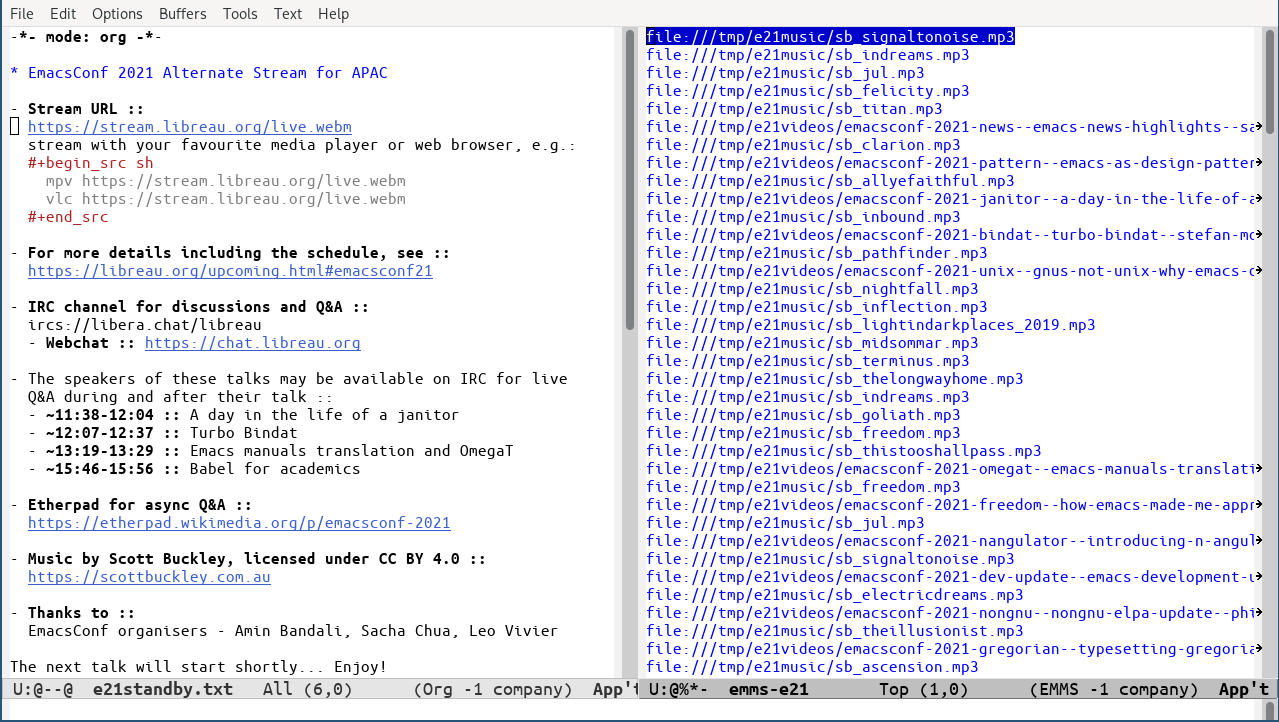

I also used an EMMS playlist to interlace the talk videos with standby music tracks. If the buffer times between talks were not so short, the whole event could have been autopiloted with elisp

run-at-time!

Figure 1: Standby emacs session during the stream

2021-12-06 - The useful GPL "or later" clause

Ariadne Conill wrote a piece on GPL "or later" clause. I made a comment about two weeks ago, which was under moderation but has not appeared as of today. So I decided to publish it below (with some minor edits).

The article says:

The primary motive for the version upgrade clause, at the time, was quite simple: the concept of using copyright to enforce software freedom, was, at the time, a new and novel concept, and there was a concern that the license might have flaws or need clarifications.

The main purpose of the -or-later clause is compatibility. Any two (strong) copyleft licenses are incompatible. If a program is licensed under GPLv2-only, it is incompatible with GPLv3. Same goes for version 3: a GPLv3'd program will likely not be combinable with future GPLv4'd programs.

The article continues:

However, for all of the success of the GPLv3 drafting process, it must be noted that the GPL is ultimately published by the Free Software Foundation, an organization that many have questioned the long-term viability of lately.

What long-term viability? According to https://www.fsf.org/news/fsf-board-frequently-asked-questions-faq#FSFfinancialstatus:

The FSF is in good financial health. As is the case with many organizations, the pandemic affected the FSF, impacting donors, making it impossible to host or attend in-person events, and disrupting operations. Fortunately, conservative financial planning over the years provided the FSF with sufficient reserves to weather these difficulties.

The rating organization Charity Navigator recently gave the FSF its 8th consecutive 4-star rating and, for the first time ever, a perfect overall score: https://www.fsf.org/news/free-software-foundation-awarded-perfect-score-from-charity-navigator-plus-eighth-consecutive-four-star-rating.

The FSF does not depend on large single sources of funding. It accepts and appreciates support from corporations who want to give back by contributing to the development and advocacy for free software, but direct corporate support accounted for less than 3% of FSF revenue in its most recently audited fiscal year.

The vast majority of FSF’s financial support comes from individuals – many, but not all, of whom choose to become associate members. At this moment, the FSF has more associate members than at any time in its history.

The original article continues:

And this is ultimately the problem: what happens if the FSF shuts down, and has to liquidate? What if an intellectual property troll acquires the GNU copyright assignments, or acquires the trademark rights to the FSF name, and publishes a new GPL version? There are many possibilities to be concerned about, but developers can do two things to mitigate the damage.

It is baked into GPL terms that future versions of the license have to be similar to the current version in spirit, see Section 14 of GPLv3 text, which protects GPL from the FSF:

The Free Software Foundation may publish revised and/or new versions of the GNU General Public License from time to time. Such new versions will be similar in spirit to the present version, but may differ in detail to address new problems or concerns.

On the other hand, GPLv3-or-later, as its name implies, offers a choice. The recipient of a program under this license can choose to apply GPLv3, or a future version e.g. GPLv4, and if the future version is bad all is not lost:

https://www.gnu.org/licenses/gpl-faq.html#VersionThreeOrLater

Suppose a program says “Version 3 of the GPL or any later version” and a new version of the GPL is released. If the new GPL version gives additional permission, that permission will be available immediately to all the users of the program. But if the new GPL version has a tighter requirement, it will not restrict use of the current version of the program, because it can still be used under GPL version 3. When a program says “Version 3 of the GPL or any later version”, users will always be permitted to use it, and even change it, according to the terms of GPL version 3—even after later versions of the GPL are available.

If a tighter requirement in a new version of the GPL need not be obeyed for existing software, how is it useful? Once GPL version 4 is available, the developers of most GPL-covered programs will release subsequent versions of their programs specifying “Version 4 of the GPL or any later version”. Then users will have to follow the tighter requirements in GPL version 4, for subsequent versions of the program.

However, developers are not obligated to do this; developers can continue allowing use of the previous version of the GPL, if that is their preference.

Continues on the original article:

First, they can stop using the “or later” clause in new GPL-licensed code.

This is a bad idea and likely harmful to the free software movement, because programs licensed under newer GPL will not be compatible with programs licensed under GPLv3-only.

Second, they can stop assigning copyright to the FSF. In the event that the FSF becomes compromised, for example, by an intellectual property troll, this limits the scope of their possible war chest for malicious GPL enforcement litigation. As we have learned from the McHardy cases involving Netfilter, in a project with multiple copyright holders, effective GPL enforcement litigation is most effective when done as a class action. In this way, dilution of the FSF copyright assignment pool protects the commons over time from exposure to malicious litigation by a compromised FSF.

The copyright assignment enables the FSF as the copyright holder to enforce GPL effectively.

The assignment contract safeguards the future of assigned work https://www.fsf.org/bulletin/2014/spring/copyright-assignment-at-the-fsf:

But the most important element of the assignment contract is the promise we make to every contributor and community member: We promise to always keep the software free. This promise extends to any successors in the copyright, meaning that even if the FSF were to go away the freedom of all users to share in the contributions wouldn't.

Finally, note there is a difference between Creative Commons licenses and GPL regarding the -or-later variants. GPL offers people the choice to use -only or -or-later, though FSF recommends the latter. Contrast that with Creative Commons licenses where -or-later is built-in, and the recipient has no choice.

2021-07-14 - Support Richard M. Stallman

Finally I found some time to write about this.

In LibrePlanet 2021 in late March, Richard Stallman announced in his talk that he was returning to the Board of Directors of the Free Software Foundation. This was after he was forced to resign by a smear campaign in September 2019.

It was great news! It was a relief and like some kind of belated justice for him.

The events took a dark turn soon after. An "open letter" labelling Stallman many things he is not gained support from a group of established people in the "open source" community, and organisations in the same community, including Creative Commons, Mozilla, The Tor Project and Framasoft. The letter called for the removal of Stallman from his life's work, and cited defamatory materials as evidence.

Other organisations also joined in, including Software Freedom Conservancy, Free Software Foundation Europe and Electronic Frontier Foundation and published letters condemning the imaginary crimes committed by Stallman and issued sanctions against him and the FSF.

These groups and people refused to engage in discussions and some outright censored disagreements on this matter.

It was a religious inquisition, a lynch mob, and a textbook case of ritual defamation. It damaged the free software movement and harmed the Free Software Foundation. The management team resigned, leaving the organisation in a bad shape, which was likely capitalised by opportunists in the GCC Steering Committee to remove Stallman, and remove the copyright assignment requirement from the project without community consultation, which further set a precedence and other GNU projects were planning on a similar move. The dilution of copyright will make GPL enforcement harder for these projects.

I condemn the defamatory attacks and support Richard M. Stallman and I hope you join me. Please see also https://stallmansupport.org.

P.S. Alexandre Oliva wrote a very insightful piece on the drama.

2020-08-02 - ia lawsuit

The four big publishers Hachette, HarperCollins, Wiley, and Penguin Random House are still pursuing Internet Archive.

[Their] lawsuit does not stop at seeking to end the practice of Controlled Digital Lending. These publishers call for the destruction of the 1.5 million digital books that Internet Archive makes available to our patrons. This form of digital book burning is unprecedented and unfairly disadvantages people with print disabilities. For the blind, ebooks are a lifeline, yet less than one in ten exists in accessible formats. Since 2010, Internet Archive has made our lending library available to the blind and print disabled community, in addition to sighted users. If the publishers are successful with their lawsuit, more than a million of those books would be deleted from the Internet's digital shelves forever.

2020-08-02 - fsf-membership

I am a proud associate member of Free Software Freedom. For me the philosophy of Free Software is about ensuring the enrichment of a digital commons, so that knowledge and information are not concentrated in the hands of selected privileged people and locked up as "intellectual property". The genius of copyleft licenses like GNU (A)GPL ensures software released for the public, remains public. Open source does not care about that.

If you also care about the public good, the hacker ethics, or the spirit of the web, please take a moment to consider joining FSF as an associate member. It comes with numerous perks and benefits.

2020-06-21 - how-can-you-help-ia

How can you help the Internet Archive? Use it. It's more than the Wayback Machine. And get involved.

2020-06-12 - open-library

Open Library was cofounded by Aaron Swartz. As part of the Internet Archive, it has done good work to spread knowledge. However it is currently being sued by four major publishers for the National Emergency Library. IA decided to close the NEL two weeks earlier than planned, but the lawsuit is not over, which in the worst case scenario has the danger of resulting in Controlled Digital Lending being considered illegal and (less likely) bancruptcy of the Internet Archive. If this happens it will be a big setback of the free-culture movement.

2020-04-15 - sanders-suspend-campaign

Suspending the campaign is different from dropping out of the race. Bernie Sanders remains on the ballot, and indeed in his campaign suspension speech he encouraged people to continue voting for him in the democratic primaries to push for changes in the convention.

2019-09-30 - defense-stallman

Someone wrote a bold article titled "In Defense of Richard Stallman". Kudos to him.

Also, an interesting read: Famous public figure in tech suffers the consequences for asshole-ish behavior.

2019-09-29 - stallman-resign

Last week Richard Stallman resigned from FSF. It is a great loss for the free software movement.

The apparent cause of his resignation and the events that triggered it reflect some alarming trends of the zeitgeist. Here is a detailed review of what happened: Low grade "journalists" and internet mob attack RMS with lies. In-depth review.. Some interesting articles on this are: Weekly Roundup: The Passion Of Saint iGNUcius Edition, Why I Once Called for Richard Stallman to Step Down.

Dishonest and misleading media pieces involved in this incident include The Daily Beast, Vice, Tech Crunch, Wired.

2019-03-16 - decss-haiku

Muse! When we learned to count, little did we know all the things we could do some day by shuffling those numbers: Pythagoras said "All is number" long before he saw computers and their effects, or what they could do by computation, naive and mechanical fast arithmetic. It changed the world, it changed our consciousness and lives to have such fast math available to us and anyone who cared to learn programming. Now help me, Muse, for I wish to tell a piece of controversial math, for which the lawyers of DVD CCA don't forbear to sue: that they alone should know or have the right to teach these skills and these rules. (Do they understand the content, or is it just the effects they see?) And all mathematics is full of stories (just read Eric Temple Bell); and CSS is no exception to this rule. Sing, Muse, decryption once secret, as all knowledge, once unknown: how to decrypt DVDs.

Seth Schoen, DeCSS haiku

2019-01-27 - learning-undecidable

My take on the Nature paper Learning can be undecidable:

Fantastic article, very clearly written.

So it reduces a kind of learninability called estimating the maximum (EMX) to the cardinality of real numbers which is undecidable.

When it comes to the relation between EMX and the rest of machine learning framework, the article mentions that EMX belongs to "extensions of PAC learnability include Vapnik's statistical learning setting and the equivalent general learning setting by Shalev-Shwartz and colleagues" (I have no idea what these two things are), but it does not say whether EMX is representative of or reduces to common learning tasks. So it is not clear whether its undecidability applies to ML at large.

Another condition to the main theorem is the union bounded closure assumption. It seems a reasonable property of a family of sets, but then again I wonder how that translates to learning.

The article says "By now, we know of quite a few independence [from mathematical axioms] results, mostly for set theoretic questions like the continuum hypothesis, but also for results in algebra, analysis, infinite combinatorics and more. Machine learning, so far, has escaped this fate." but the description of the EMX learnability makes it more like a classical mathematical / theoretical computer science problem rather than machine learning.

An insightful conclusion: "How come learnability can neither be proved nor refuted? A closer look reveals that the source of the problem is in defining learnability as the existence of a learning function rather than the existence of a learning algorithm. In contrast with the existence of algorithms, the existence of functions over infinite domains is a (logically) subtle issue."

In relation to practical problems, it uses an example of ad targeting. However, A lot is lost in translation from the main theorem to this ad example.

The EMX problem states: given a domain X, a distribution P over X which is unknown, some samples from P, and a family of subsets of X called F, find A in F that approximately maximises P(A).

The undecidability rests on X being the continuous [0, 1] interval, and from the insight, we know the problem comes from the cardinality of subsets of the [0, 1] interval, which is "logically subtle".

In the ad problem, the domain X is all potential visitors, which is finite because there are finite number of people in the world. In this case P is a categorical distribution over the 1..n where n is the population of the world. One can have a good estimate of the parameters of a categorical distribution by asking for sufficiently large number of samples and computing the empirical distribution. Let's call the estimated distribution Q. One can choose the from F (also finite) the set that maximises Q(A) which will be a solution to EMX.

In other words, the theorem states: EMX is undecidable because not all EMX instances are decidable, because there are some nasty ones due to infinities. That does not mean no EMX instance is decidable. And I think the ad instance is decidable. Is there a learning task that actually corresponds to an undecidable EMX instance? I don't know, but I will not believe the result of this paper is useful until I see one.

h/t Reynaldo Boulogne

2018-12-11 - gavin-belson

I don't know about you people, but I don't want to live in a world where someone else makes the world a better place better than we do.

Gavin Belson, Silicon Valley S2E1.

I came across this quote in a Slate post about Facebook

2018-10-05 - margins

With Fermat's Library's new tool margins, you can host your own journal club.

2018-09-18 - rnn-turing

Just some non-rigorous guess / thought: Feedforward networks are like combinatorial logic, and recurrent networks are like sequential logic (e.g. data flip-flop is like the feedback connection in RNN). Since NAND

- combinatorial logic + sequential logic = von Neumann machine which is

an approximation of the Turing machine, it is not surprising that RNN (with feedforward networks) is Turing complete (assuming that neural networks can learn the NAND gate).

2018-09-07 - zitierkartell

2018-09-05 - short-science

- ShortScience.org is a platform for post-publication discussion aiming to improve accessibility and reproducibility of research ideas.

- The website has over 800 summaries, mostly in machine learning, written by the community and organized by paper, conference, and year.

- Reading summaries of papers is useful to obtain the perspective and insight of another reader, why they liked or disliked it, and their attempt to demystify complicated sections.

- Also, writing summaries is a good exercise to understand the content of a paper because you are forced to challenge your assumptions when explaining it.

- Finally, you can keep up to date with the flood of research by reading the latest summaries on our Twitter and Facebook pages.

2018-08-13 - darknet-diaries

Darknet Diaries is a cool podcast. According to its about page it covers "true stories from the dark side of the Internet. Stories about hackers, defenders, threats, malware, botnets, breaches, and privacy."

2018-06-20 - coursera-basic-income

Coursera is having a Teach-Out on Basic Income.

2018-06-19 - pun-generator

2018-06-15 - hackers-excerpt

But as more nontechnical people bought computers, the things that impressed hackers were not as essential. While the programs themselves had to maintain a certain standard of quality, it was quite possible that the most exacting standards—those applied by a hacker who wanted to add one more feature, or wouldn't let go of a project until it was demonstrably faster than anything else around—were probably counterproductive. What seemed more important was marketing. There were plenty of brilliant programs which no one knew about. Sometimes hackers would write programs and put them in the public domain, give them away as easily as John Harris had lent his early copy of Jawbreaker to the guys at the Fresno computer store. But rarely would people ask for public domain programs by name: they wanted the ones they saw advertised and discussed in magazines, demonstrated in computer stores. It was not so important to have amazingly clever algorithms. Users would put up with more commonplace ones.

The Hacker Ethic, of course, held that every program should be as good as you could make it (or better), infinitely flexible, admired for its brilliance of concept and execution, and designed to extend the user's powers. Selling computer programs like toothpaste was heresy. But it was happening. Consider the prescription for success offered by one of a panel of high-tech venture capitalists, gathered at a 1982 software show: "I can summarize what it takes in three words: marketing, marketing, marketing." When computers are sold like toasters, programs will be sold like toothpaste. The Hacker Ethic notwithstanding.

Hackers: Heroes of Computer Revolution, by Steven Levy.

2018-06-11 - catalan-overflow

To compute Catalan numbers without unnecessary overflow, use the recurrence formula \(C_n = {4 n - 2 \over n + 1} C_{n - 1}\).

2018-06-04 - boyer-moore

The Boyer-Moore algorithm for finding the majority of a sequence of elements falls in the category of "very clever algorithms".

int majorityElement(vector<int>& xs) { int count = 0; int maj = xs[0]; for (auto x : xs) { if (x == maj) count++; else if (count == 0) maj = x; else count--; } return maj; }

2018-05-30 - how-to-learn-on-your-own

Roger Grosse's post How to learn on your own (2015) is an excellent modern guide on how to learn and research technical stuff (especially machine learning and maths) on one's own.

2018-05-25 - 2048-mdp

This post models 2048 as an MDP and solves it using policy iteration and backward induction.

2018-05-22 - ats

ATS (Applied Type System) is a programming language designed to unify programming with formal specification. ATS has support for combining theorem proving with practical programming through the use of advanced type systems. A past version of The Computer Language Benchmarks Game has demonstrated that the performance of ATS is comparable to that of the C and C++ programming languages. By using theorem proving and strict type checking, the compiler can detect and prove that its implemented functions are not susceptible to bugs such as division by zero, memory leaks, buffer overflow, and other forms of memory corruption by verifying pointer arithmetic and reference counting before the program compiles. Additionally, by using the integrated theorem-proving system of ATS (ATS/LF), the programmer may make use of static constructs that are intertwined with the operative code to prove that a function attains its specification.

2018-05-20 - bostoncalling

(5-second fame) I sent a picture of my kitchen sink to BBC and got mentioned in the latest Boston Calling episode (listen at 25:54).

2018-05-18 - colah-blog

colah's blog has a cool feature that allows you to comment on any paragraph of a blog post. Here's an example. If it is doable on a static site hosted on Github pages, I suppose it shouldn't be too hard to implement. This also seems to work more seamlessly than Fermat's Library, because the latter has to embed pdfs in webpages. Now fantasy time: imagine that one day arXiv shows html versions of papers (through author uploading or conversion from TeX) with this feature.

2018-05-15 - random-forests

Stanford Lagunita's statistical learning course has some excellent lectures on random forests. It starts with explanations of decision trees, followed by bagged trees and random forests, and ends with boosting. From these lectures it seems that:

- The term "predictors" in statistical learning = "features" in machine learning.

- The main idea of random forests of dropping predictors for individual trees and aggregate by majority or average is the same as the idea of dropout in neural networks, where a proportion of neurons in the hidden layers are dropped temporarily during different minibatches of training, effectively averaging over an emsemble of subnetworks. Both tricks are used as regularisations, i.e. to reduce the variance. The only difference is: in random forests, all but a square root number of the total number of features are dropped, whereas the dropout ratio in neural networks is usually a half.

By the way, here's a comparison between statistical learning and machine learning from the slides of the Statistcal Learning course:

2018-05-14 - open-review-net

Open peer review means peer review process where communications e.g. comments and responses are public.

Like SciPost mentioned in my post, OpenReview.net is an example of open peer review in research. It looks like their focus is machine learning. Their about page states their mission, and here's an example where you can click on each entry to see what it is like. We definitely need this in the maths research community.

2018-05-11 - rnn-fsm

Related to a previous micropost.

These slides from Toronto are a nice introduction to RNN (recurrent neural network) from a computational point of view. It states that RNN can simulate any FSM (finite state machine, a.k.a. finite automata abbr. FA) with a toy example computing the parity of a binary string.

Goodfellow et. al.'s book (see page 372 and 374) goes one step further, stating that RNN with a hidden-to-hidden layer can simulate Turing machines, and not only that, but also the universal Turing machine abbr. UTM (the book referenced Siegelmann-Sontag), a property not shared by the weaker network where the hidden-to-hidden layer is replaced by an output-to-hidden layer (page 376).

By the way, the RNN with a hidden-to-hidden layer has the same architecture as the so-called linear dynamical system mentioned in Hinton's video.

From what I have learned, the universality of RNN and feedforward networks are therefore due to different arguments, the former coming from Turing machines and the latter from an analytical view of approximation by step functions.

2018-05-10 - math-writing-decoupling

One way to write readable mathematics is to decouple concepts. One idea is the following template. First write a toy example with all the important components present in this example, then analyse each component individually and elaborate how (perhaps more complex) variations of the component can extend the toy example and induce more complex or powerful versions of the toy example. Through such incremental development, one should be able to arrive at any result in cutting edge research after a pleasant journey.

It's a bit like the UNIX philosophy, where you have a basic system of modules like IO, memory management, graphics etc, and modify / improve each module individually (H/t NAND2Tetris).

The book Neutral networks and deep learning by Michael Nielsen is an example of such approach. It begins the journey with a very simple neutral net with one hidden layer, no regularisation, and sigmoid activations. It then analyses each component including cost functions, the back propagation algorithm, the activation functions, regularisation and the overall architecture (from fully connected to CNN) individually and improve the toy example incrementally. Over the course the accuracy of the example of mnist grows incrementally from 95.42% to 99.67%.

2018-05-09 - neural-turing-machine

One way RNNs are currently being used is to connect neural networks more closely to traditional ways of thinking about algorithms, ways of thinking based on concepts such as Turing machines and (conventional) programming languages. A 2014 paper developed an RNN which could take as input a character-by-character description of a (very, very simple!) Python program, and use that description to predict the output. Informally, the network is learning to "understand" certain Python programs. A second paper, also from 2014, used RNNs as a starting point to develop what they called a neural Turing machine (NTM). This is a universal computer whose entire structure can be trained using gradient descent. They trained their NTM to infer algorithms for several simple problems, such as sorting and copying.

As it stands, these are extremely simple toy models. Learning to execute the Python program

print(398345+42598)doesn't make a network into a full-fledged Python interpreter! It's not clear how much further it will be possible to push the ideas. Still, the results are intriguing. Historically, neural networks have done well at pattern recognition problems where conventional algorithmic approaches have trouble. Vice versa, conventional algorithmic approaches are good at solving problems that neural nets aren't so good at. No-one today implements a web server or a database program using a neural network! It'd be great to develop unified models that integrate the strengths of both neural networks and more traditional approaches to algorithms. RNNs and ideas inspired by RNNs may help us do that.Michael Nielsen, Neural networks and deep learning

2018-05-09 - neural-nets-activation

What makes the rectified linear activation function better than the sigmoid or tanh functions? At present, we have a poor understanding of the answer to this question. Indeed, rectified linear units have only begun to be widely used in the past few years. The reason for that recent adoption is empirical: a few people tried rectified linear units, often on the basis of hunches or heuristic arguments. They got good results classifying benchmark data sets, and the practice has spread. In an ideal world we'd have a theory telling us which activation function to pick for which application. But at present we're a long way from such a world. I should not be at all surprised if further major improvements can be obtained by an even better choice of activation function. And I also expect that in coming decades a powerful theory of activation functions will be developed. Today, we still have to rely on poorly understood rules of thumb and experience.

Michael Nielsen, Neutral networks and deep learning

2018-05-08 - nlp-arxiv

Primer Science is a tool by a startup called Primer that uses NLP to summarize contents (but not single papers, yet) on arxiv. A developer of this tool predicts in an interview that progress on AI's ability to extract meanings from AI research papers will be the biggest accelerant on AI research.

2018-05-08 - sql-injection-video

Computerphile has some brilliant educational videos on computer science, like a demo of SQL injection, a toy example of the lambda calculus, and explaining the Y combinator.

2018-05-08 - neural-nets-regularization

no-one has yet developed an entirely convincing theoretical explanation for why regularization helps networks generalize. Indeed, researchers continue to write papers where they try different approaches to regularization, compare them to see which works better, and attempt to understand why different approaches work better or worse. And so you can view regularization as something of a kludge. While it often helps, we don't have an entirely satisfactory systematic understanding of what's going on, merely incomplete heuristics and rules of thumb.

There's a deeper set of issues here, issues which go to the heart of science. It's the question of how we generalize. Regularization may give us a computational magic wand that helps our networks generalize better, but it doesn't give us a principled understanding of how generalization works, nor of what the best approach is.

Michael Nielsen, Neural networks and deep learning

2018-05-07 - learning-knowledge-graph-reddit-journal-club

It is a natural idea to look for ways to learn things like going through a skill tree in a computer RPG.

For example I made a DAG for juggling.

Websites like Knowen and Metacademy explore this idea with added flavour of open collaboration.

The design of Metacademy looks quite promising. It also has a nice tagline: "your package manager for knowledge".

There are so so many tools to assist learning / research / knowledge sharing today, and we should keep experimenting, in the hope that eventually one of them will scale.

On another note, I often complain about the lack of a place to discuss math research online, but today I found on Reddit some journal clubs on machine learning: 1, 2. If only we had this for maths. On the other hand r/math does have some interesting recurring threads as well: Everything about X and What Are You Working On?. Hopefully these threads can last for years to come.

2018-05-02 - simple-solution-lack-of-math-rendering

The lack of maths rendering in major online communication platforms like instant messaging, email or Github has been a minor obsession of mine for quite a while, as I saw it as a big factor preventing people from talking more maths online. But today I realised this is totally a non-issue. Just do what people on IRC have been doing since the inception of the universe: use a (latex) pastebin.

2018-05-01 - neural-networks-programming-paradigm

Neural networks are one of the most beautiful programming paradigms ever invented. In the conventional approach to programming, we tell the computer what to do, breaking big problems up into many small, precisely defined tasks that the computer can easily perform. By contrast, in a neural network we don't tell the computer how to solve our problem. Instead, it learns from observational data, figuring out its own solution to the problem at hand.

Michael Nielsen - What this book (Neural Networks and Deep Learning) is about

Unrelated to the quote, note that Nielsen's book is licensed under CC BY-NC, so one can build on it and redistribute non-commercially.

2018-04-30 - google-search-not-ai

But, users have learned to accommodate to Google not the other way around. We know what kinds of things we can type into Google and what we can't and we keep our searches to things that Google is likely to help with. We know we are looking for texts and not answers to start a conversation with an entity that knows what we really need to talk about. People learn from conversation and Google can't have one. It can pretend to have one using Siri but really those conversations tend to get tiresome when you are past asking about where to eat.

Roger Schank - Fraudulent claims made by IBM about Watson and AI

2018-04-06 - hacker-ethics

- Access to computers—and anything that might teach you something about the way the world works—should be unlimited and total. Always yield to the Hands-On Imperative!

- All information should be free.

- Mistrust Authority—Promote Decentralization.

- Hackers should be judged by their hacking, not bogus criteria such as degrees, age, race, or position.

- You can create art and beauty on a computer.

- Computers can change your life for the better.

The Hacker Ethic, Hackers: Heroes of Computer Revolution, by Steven Levy

2018-03-23 - static-site-generator

"Static site generators seem like music databases, in that everyone eventually writes their own crappy one that just barely scratches the itch they had (and I'm no exception)."

_david__@hackernews

So did I.